Have a look at the trailer of übersetzen – vertimas, a piece for dancer, sound and projection:

Sound optimized for headphone playback

technical details

The dancer is captured by a Kinect[ref name=”kinect”]Microsoft Kinect, www.xbox.com/kinect[/ref] sensor, originally developed for Microsoft’s Xbox 360.

This sensor provides a depth video stream, which is used in two ways.

Using the OpenNI[ref name=”openni”]OpenNI Framework and NITE, www.openni.org[/ref] framework and NITE by PrimeSense, both integrated into Pure Data through my external pix_openni[ref name=”pix_openni”]pix_openni[/ref], a skeleton representation of the dancer with 15 joints is available for measuring movements.

These movements are translated into sounds.
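To give a rough idea of what "translating movement into sound" can look like, here is a minimal sketch in Python. It is not the actual Pure Data patch: the function names, the choice of joint speed as the measured quantity, and the speed-to-pitch mapping with its ranges are all assumptions made up for illustration.

```python
import math

def joint_speed(prev, curr, dt):
    """Speed of one joint between two frames, in position units per second.

    prev and curr are (x, y, z) positions of the same joint (hypothetical
    input; the real data comes from the skeleton tracker).
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    return math.dist(prev, curr) / dt

def speed_to_frequency(speed, max_speed=3.0, f_min=110.0, f_max=880.0):
    """Map a speed onto an exponential pitch scale between f_min and f_max.

    max_speed, f_min and f_max are illustrative values, not taken from the piece.
    """
    t = min(max(speed / max_speed, 0.0), 1.0)  # clamp to 0..1
    return f_min * (f_max / f_min) ** t

# Example: a hand joint moves 5 cm during one frame at 30 fps.
prev_hand = (0.10, 1.20, 2.00)
curr_hand = (0.13, 1.24, 2.00)
speed = joint_speed(prev_hand, curr_hand, dt=1 / 30)
freq = speed_to_frequency(speed)
```

The exponential mapping is used here because equal steps in speed then correspond to equal musical intervals; any other control parameter (amplitude, filter cutoff, grain density) could be driven the same way.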

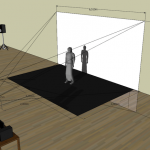

For the projection onto the dancer’s body, the region where the dancer moves is extracted by thresholding the depth image with an OpenGL shader on the GPU. The resulting mask is filled with color (or other visual content) and projected back using the Extended View Toolkit[ref name=”ev”]Extended View Toolkit[/ref] by Marian Weger and Peter Venus.
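The thresholding step can be sketched on the CPU in a few lines of Python. This is only an illustration of the idea; the piece itself does this in an OpenGL shader on the GPU. The function name, the millimetre units and the near/far values are assumptions, as is the convention that a depth of 0 means "no reading".

```python
def depth_mask(depth_frame, near_mm, far_mm):
    """Return a binary mask: 1 where near_mm <= depth <= far_mm, else 0.

    depth_frame is a list of rows of depth values (assumed to be in mm).
    Pixels outside the range, including 0 (assumed "no reading"), become 0.
    """
    return [
        [1 if near_mm <= d <= far_mm else 0 for d in row]
        for row in depth_frame
    ]

# Tiny made-up frame: back wall at 3.5 m, dancer at about 2.1 m.
frame = [
    [0,    3500, 3500, 3500],
    [3500, 2100, 2200, 3500],
    [3500, 2050, 2150, 3500],
]
mask = depth_mask(frame, near_mm=1500, far_mm=2500)
```

Every pixel with value 1 belongs to the depth slice the dancer moves in; this is the area that would then be filled with color and sent to the projector.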

All software is developed in Pure Data[ref name=”pd”]Pure Data[/ref] and Gem on a Linux operating system, together with custom-developed externals and OpenGL shaders.

Thanks for projection technology support to Marian Weger!!

Check out his performance: Monster

Hi, I’m a music teacher and composer, I’m learning Pd right now, I use linux most of the time. I work also as a part-time composer for a contemporary dance company, so I think your work is very interesting! Congratulations for ubersetzen vertimas. I’m working on similar things right now, hope we could exchange ideas! my mail: pdro74@hotmail.com